When LLM users share their chatbot experiences, the accounts vary widely. It often seems like they’re talking about two different products.

I’ve suggested that there are two types of users: “centaurs” and “reverse-centaurs.”

Centaurs are people who choose how to use a machine, like an LLM, in their work.

Reverse-centaurs are those forced to assist a machine. These workers are now managing an LLM. Their managers cut staff, and the remaining team is left supervising an LLM that can’t truly replace the workers who were let go.

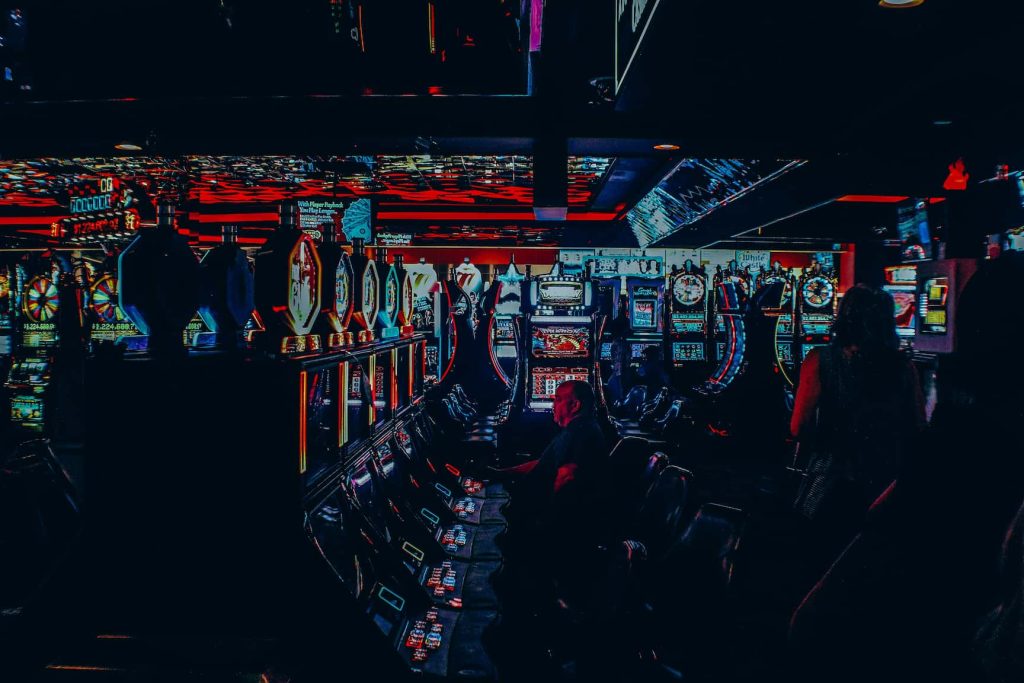

I recently found an essay by Glyph called “The Futzing Fraction.” It presents a different but compatible hypothesis, and I find it surprisingly compelling.

Users often think LLM coding assistants save them more time than they do in reality. Glyph compares this to a slot machine. Losing a dollar feels normal. It’s “the expected outcome.” But when a slot pays out a jackpot, you remember that for the rest of your life.

Glyph offers a thought experiment: you ask a chatbot to write a program. At first, the code doesn’t work. But after ten minutes of tweaking, it finally does. That victory feels incredible. If writing the program by hand would have taken four hours, you’ll always remember saving 3 hours and 50 minutes by using a chatbot. But you might have gone through the “I’ll futz with this for ten minutes” step so many times that the job actually took six hours. That’s two hours longer than it would have taken to write the program from scratch.
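The arithmetic behind that thought experiment can be sketched in a few lines of Python. This is only an illustration, not Glyph's own formulation. The four-hour from-scratch estimate is inferred from the numbers above (six hours being two hours longer than writing by hand), and the assumption that every futzing round lasts exactly ten minutes, along with the names `FutzingSession` and `format_hm`, is mine.

```python
from dataclasses import dataclass


@dataclass
class FutzingSession:
    """Hypothetical model of one chatbot-assisted coding task (all times in minutes)."""
    estimate_from_scratch_min: int  # how long writing it by hand would take
    futz_round_min: int             # length of one "ten more minutes" round
    rounds: int                     # how many rounds actually happened

    def remembered_savings_min(self) -> int:
        # Memory keeps only the final, successful round.
        return self.estimate_from_scratch_min - self.futz_round_min

    def actual_savings_min(self) -> int:
        # Reality counts every round; a negative result means time was lost.
        return self.estimate_from_scratch_min - self.futz_round_min * self.rounds


def format_hm(minutes: int) -> str:
    """Render a signed minute count as H:MM."""
    sign = "-" if minutes < 0 else ""
    hours, mins = divmod(abs(minutes), 60)
    return f"{sign}{hours}:{mins:02d}"


# Assumed inputs: a 4-hour hand-written estimate and 36 ten-minute rounds (6 hours).
session = FutzingSession(estimate_from_scratch_min=240, futz_round_min=10, rounds=36)
print(format_hm(session.remembered_savings_min()))  # 3:50
print(format_hm(session.actual_savings_min()))      # -2:00
```

The gap between the two printed numbers is the point: the remembered figure depends only on the last round, while the real one depends on how many rounds there were, which is exactly the number people tend not to track.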

Glyph points out that in other business activities, the “let’s try this for 10 more minutes” method often succeeds. But with LLMs, you face an “emotionally variable, intermittent reward schedule,” which makes that strategy hard to use effectively. You don’t see steady progress. Instead, you get unpredictable successes separated by long stretches of quiet frustration. Over time, this pattern can skew your view of productivity. You might think the tool is more helpful than it really is.

That’s only one parallel. LLM coding tools also resemble slot machines in how they influence our behavior. Reg Braithwaite suggested that AI companies operate like casinos: they charge you each time you re-prompt the AI. He writes:

> When you pay by “pulling the handle,” the vendor aims to show progress, not to fix your problem with one pull.

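LLM coding assistants are often likened to interns who need supervision. But there’s an important difference between an intern and an LLM. Helping an intern is a smart move for a senior coder. It builds a new generation of skilled colleagues. Time spent correcting an LLM builds no such thing.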

Conclusion

In the end, our sense of how “useful” an LLM is often comes from the emotional highs it gives us, not the actual time it saves. An impressive output feels like a win, while the hours of quiet frustration fade from memory. This casino-style reward pattern can make people overvalue AI. It can also cause them to overlook the cost of constant adjustments. The answer isn’t to shun LLMs. Instead, we should use them wisely. Let’s celebrate the successes but stay honest about the unseen effort behind them.